Building with transcripts: Search, indexing, display, and downstream integrations

Transcript search explained: learn how indexing, timestamps, display, and integrations make audio and video searchable, accurate, and easy to use at scale.

Transcript search transforms audio and video content from unstructured data into searchable knowledge bases, enabling you to find specific words or phrases and jump directly to the moment they were spoken. This capability turns hours of recordings into instantly accessible information—whether you're searching customer calls for pricing discussions, meeting recordings for action items, or podcast archives for specific topics.

Building effective transcript search requires understanding four critical components: accurate speech-to-text conversion with precise timestamps, smart indexing strategies that balance performance with search precision, thoughtful result display that provides context and navigation, and strategic integrations that connect search insights to your existing business workflows. Each component presents unique technical challenges that determine whether your transcript search becomes a trusted tool or a frustrating experiment.

How does transcript search work?

Transcript search is the ability to find specific words or phrases within written versions of audio and video content. This means you can search through meeting recordings, customer calls, or podcast episodes just like searching a document—but with the added power to jump directly to the moment those words were spoken.

Here's how the process works: speech-to-text converts your audio into written text, an indexing system organizes that text for fast searching, a search engine finds matches when you query, and results display with clickable timestamps that take you to the exact moment in the original recording. Think of it like having a super-powered Ctrl+F for any audio or video file.

But there's a catch—if your transcript is wrong, everything else fails. Poor transcription accuracy means searching for "quarterly revenue" might miss instances where it was transcribed as "courtly avenue." This is why accurate speech-to-text forms the foundation of any transcript search system.

The core pipeline follows these steps. First, audio is processed by speech recognition models that convert speech to text while preserving crucial metadata like timestamps and speaker labels. Then, indexing systems organize this text for fast retrieval. When you search, the system queries the index and returns matching segments with their original timestamps intact. Finally, you can click any result to jump directly to that moment in your audio or video.

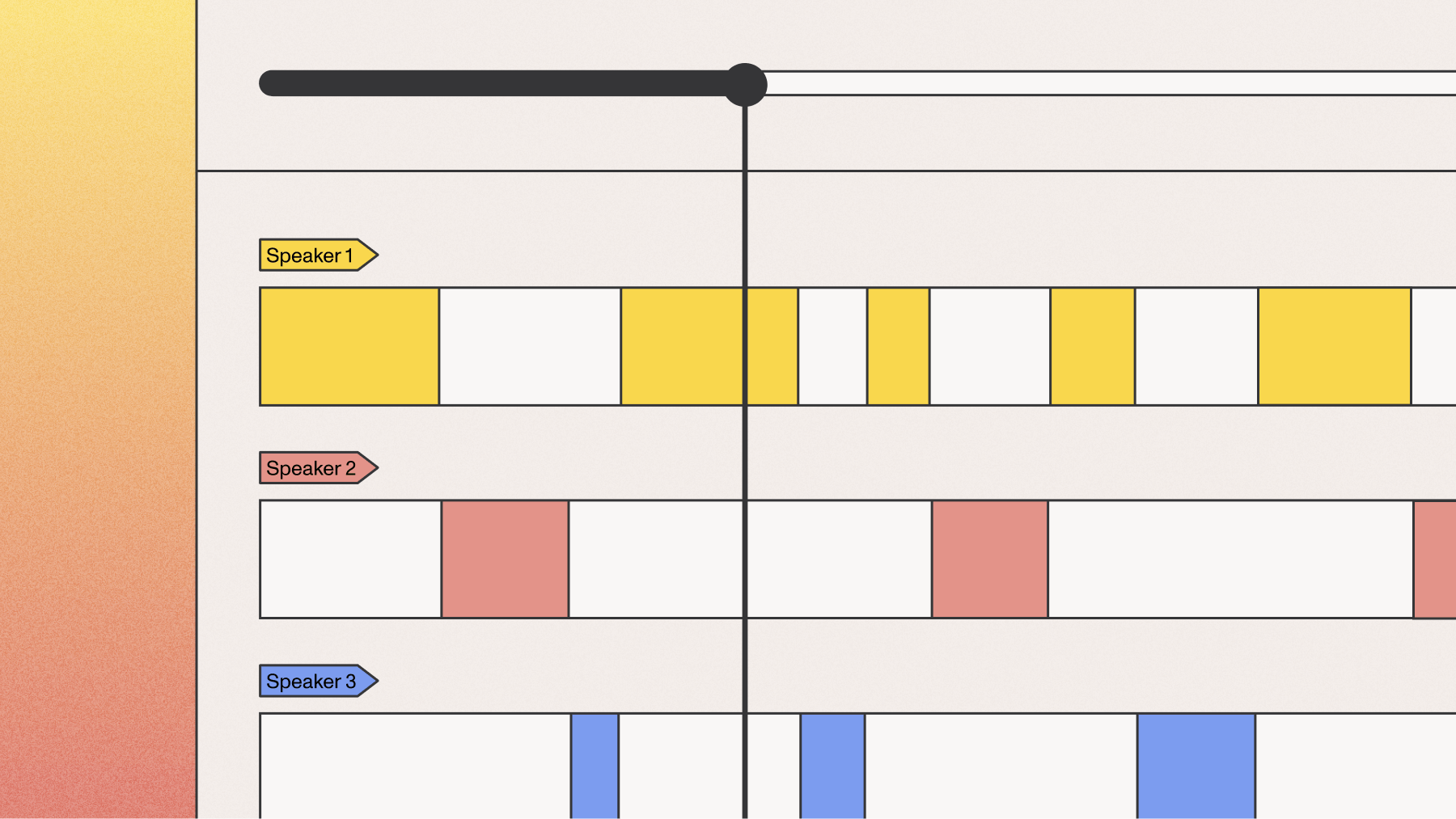

Modern speech recognition doesn't just capture words—it records when each word was spoken down to the millisecond, identifies different speakers through diarization, and assigns confidence scores to indicate transcription reliability. These elements make the difference between basic transcript search and genuinely useful conversation intelligence.

Indexing strategies for transcript search

Once you have accurate transcripts, you need to organize them so searches return results in milliseconds instead of minutes. Indexing is like creating a detailed map of your transcript content—it tells the search engine where to find every word without scanning through entire files.

You have two main indexing approaches to choose from. Document-based indexing treats each full transcript as one searchable unit, similar to how search engines handle web pages. Segment-based indexing breaks transcripts into smaller pieces—maybe 30-second chunks or individual speaker statements—and indexes each piece separately.

The choice matters more than you might think. Users don't just want to know a word exists somewhere in an hour-long recording; they want to jump to the exact moment it was spoken.
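
To make the segment-based approach concrete, here is a minimal Python sketch that groups word-level output into segments. The `Word` shape, the `segment_words` helper, and the 30-second cap are illustrative assumptions, not the output format of any particular transcription API.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start_ms: int
    end_ms: int
    speaker: str


def segment_words(words, max_ms=30_000):
    """Group words into segments, starting a new segment when the speaker
    changes or when adding the word would stretch the segment past max_ms."""
    groups = []
    current = []
    for w in words:
        if current and (
            w.speaker != current[0].speaker
            or w.end_ms - current[0].start_ms > max_ms
        ):
            groups.append(current)
            current = []
        current.append(w)
    if current:
        groups.append(current)

    # Each segment keeps the timestamps of its first and last word.
    return [
        {
            "text": " ".join(w.text for w in g),
            "start_time": g[0].start_ms,
            "end_time": g[-1].end_ms,
            "speaker_id": g[0].speaker,
        }
        for g in groups
    ]
```

Document-based indexing would be the degenerate case of one segment per file, which is why it cannot point a user at a specific moment.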

Index structure and schema design

Your index schema determines what users can search for and how fast they'll get results. Every indexed segment needs certain essential fields: the transcript text itself, precise start and end timestamps, speaker identifiers, and transcription confidence scores.

Here's a practical schema structure:

- text: The actual words spoken in this segment

- start_time: When this segment begins (stored in milliseconds)

- end_time: When this segment ends (also stored in milliseconds)

- speaker_id: Which person is speaking

- confidence: How reliable the transcription is

- file_id: Which original recording this comes from

The challenge lies in balancing precision with performance. Indexing every word with its own timestamp lets results point at the exact moment a word was spoken, but it produces very large indexes. Indexing larger chunks keeps the index manageable, but a match can then only be located to within its chunk, which is less precise than users expect.
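
The schema fields listed above can be sketched as a small Python record plus a toy inverted index. This is a simplified illustration, assuming in-memory storage and a naive tokenizer; the `Segment`, `build_index`, and `search` names are hypothetical, and a production system would normally use a dedicated search engine instead.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    text: str
    start_time: int    # milliseconds
    end_time: int      # milliseconds
    speaker_id: str
    confidence: float  # 0.0 to 1.0
    file_id: str


def tokenize(text):
    # Lowercase and keep alphanumeric runs; real analyzers do much more.
    return re.findall(r"[a-z0-9']+", text.lower())


def build_index(segments):
    """Map each token to the positions of the segments that contain it."""
    index = defaultdict(set)
    for pos, seg in enumerate(segments):
        for token in tokenize(seg.text):
            index[token].add(pos)
    return index


def search(index, segments, query):
    """Return segments that contain every token in the query (AND search)."""
    tokens = tokenize(query)
    if not tokens:
        return []
    hits = set.intersection(*(index.get(t, set()) for t in tokens))
    return [segments[i] for i in sorted(hits)]
```

Because each hit is a full `Segment`, the timestamps, speaker, and confidence come back with the match and no second lookup is needed.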

Real-time vs. batch indexing

Real-time indexing adds new transcript segments to your search index as they're created during live transcription. This approach works great when you need immediate searchability—like during live meetings where participants want to search recent discussions right away.

Batch indexing waits until transcription completes, then processes everything at once. This simpler approach handles recorded content efficiently and allows for better optimization since you're working with complete data sets.

Choose real-time when immediate access trumps everything else. Choose batch when you can wait a few minutes for better performance and simpler operations.
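
The difference between the two modes can be sketched with one small index class. This is a minimal in-memory illustration under assumed names (`IncrementalIndex`, `add`, `add_batch`); it is not tied to any specific search engine.

```python
from collections import defaultdict


class IncrementalIndex:
    """Toy index that supports both real-time and batch ingestion."""

    def __init__(self):
        self.segments = []                 # (text, start_ms) tuples
        self.postings = defaultdict(list)  # token -> list of segment ids

    def add(self, text, start_ms):
        """Real-time path: the segment is searchable as soon as this returns."""
        seg_id = len(self.segments)
        self.segments.append((text, start_ms))
        for token in set(text.lower().split()):
            self.postings[token].append(seg_id)
        return seg_id

    def add_batch(self, items):
        """Batch path: ingest a finished transcript in one pass."""
        for text, start_ms in items:
            self.add(text, start_ms)

    def lookup(self, term):
        return [self.segments[i] for i in self.postings.get(term.lower(), [])]
```

In a real engine the batch path is where optimizations such as bulk writes or index merging would happen; here it only loops over `add` to keep the sketch short.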

How to display transcript search results

Finding matches is only half the battle—you need to show results in a way that makes sense to users. The best transcript search interfaces provide enough context around each match so users understand what they're looking at before clicking.

Context windows are crucial because conversation snippets often need surrounding dialogue to make sense. A search result showing just "approved" tells you nothing useful. But showing "The budget increase was approved by the finance committee after Sarah's presentation" gives you everything you need.

You have several context approaches:

- Sentence-based: Shows the complete sentence containing your search term

- Time-based: Displays a fixed duration around the match, like 10 seconds before and after

- Speaker turn-based: Shows the entire statement from the person who said the matching words

Each approach serves different needs. Sentence-based works for quick scanning. Time-based provides consistent preview lengths. Speaker turn-based helps you understand complete thoughts and decisions.
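
Two of these context strategies can be sketched in a few lines of Python. The segment dictionaries and function names below are assumptions for illustration, and the segments are expected to be in chronological order.

```python
def time_window_context(segments, match_index, window_ms=10_000):
    """Time-based: every segment overlapping the match plus or minus window_ms."""
    match = segments[match_index]
    lo = match["start_time"] - window_ms
    hi = match["end_time"] + window_ms
    return [s for s in segments if s["end_time"] >= lo and s["start_time"] <= hi]


def speaker_turn_context(segments, match_index):
    """Speaker turn-based: extend in both directions while the speaker is unchanged."""
    speaker = segments[match_index]["speaker_id"]
    start = match_index
    while start > 0 and segments[start - 1]["speaker_id"] == speaker:
        start -= 1
    end = match_index
    while end + 1 < len(segments) and segments[end + 1]["speaker_id"] == speaker:
        end += 1
    return segments[start:end + 1]
```

Sentence-based context depends on punctuation in the transcript, so it is left out of this sketch.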

Timestamps and navigation

Every search result needs a clickable timestamp that jumps directly to that moment in your audio or video. This requires tight integration between your search system and media player—clicking "1:23:45" should start playback exactly when that word was spoken, not somewhere vaguely nearby.

Word-level timestamps from your transcription enable this precision. Without them, you're guessing where words occur within longer segments.
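
As a small illustration, the sketch below formats a millisecond offset as a clickable label and looks up the start time of a word inside a segment. The word dictionaries (`text`, `start`, `end`) and both helper names are assumed for this example.

```python
def format_timestamp(ms):
    """Render milliseconds as H:MM:SS, or M:SS when under an hour."""
    total_seconds = ms // 1000
    hours, rem = divmod(total_seconds, 3600)
    minutes, seconds = divmod(rem, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{seconds:02d}"
    return f"{minutes}:{seconds:02d}"


def find_word_start(words, term):
    """Return the start time (ms) of the first word matching term, or None."""
    target = term.lower()
    for w in words:
        # Strip trailing punctuation so "budget," still matches "budget".
        if w["text"].lower().strip(".,!?") == target:
            return w["start"]
    return None
```

The value returned by `find_word_start` is what a media player would seek to; the formatted string is only for display.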

Mobile interfaces need special consideration since screen space is limited. Consider showing condensed timestamps and using progressive disclosure—show basic results first, then expand for more context when users tap.

Speaker attribution and context

Speaker diarization transforms transcript search from finding words to understanding conversations. Instead of just knowing someone mentioned "budget increase," you can see that the CFO proposed it, the marketing director questioned it, and the CEO approved it.

Display speaker labels prominently in search results using consistent colors or visual markers. This visual consistency helps users quickly identify patterns—like noticing all the customer concerns came from one particular speaker during a support call.

Consider these speaker-enhanced search scenarios:

- Finding everything a specific executive said about company direction

- Identifying when customers mentioned pricing concerns

- Tracking how different team members contributed to project discussions
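
Scenarios like these reduce to filtering or grouping matches by speaker. The following is a minimal sketch, assuming each segment is a dictionary with `text` and `speaker_id` keys; the function names are hypothetical.

```python
from collections import Counter


def search_by_speaker(segments, term, speaker_id=None):
    """Return segments containing term, optionally limited to one speaker."""
    needle = term.lower()
    return [
        s for s in segments
        if needle in s["text"].lower()
        and (speaker_id is None or s["speaker_id"] == speaker_id)
    ]


def mentions_per_speaker(segments, term):
    """Count how many matching segments each speaker contributed."""
    return Counter(s["speaker_id"] for s in search_by_speaker(segments, term))
```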

Downstream integrations

Transcript search becomes truly powerful when it connects to your existing business systems. These integrations transform isolated search results into actionable business intelligence that flows through your organization.

The most valuable integrations connect transcript search to CRM systems, analytics platforms, and workflow automation. Each serves different business needs and requires different technical approaches.

Common integration patterns include:

- Webhooks: Push search insights to other systems in near real-time

- API polling: Regularly check for new transcript matches and update connected systems

- Direct database access: Embed transcript search directly into custom applications

- Message queues: Handle high-volume processing with reliable delivery

API design for transcript search

Well-designed APIs make transcript search integration straightforward for developers. Your search endpoint should accept the search query, optional filters for speakers or time ranges, and pagination controls for large result sets.

A typical search request includes the search terms as the main parameter, filters for speaker ID or date range, pagination settings with offset and limit, and response format preferences. The response should return matched segments with precise timestamps, surrounding context for each match, clear speaker attribution, and metadata like confidence scores.
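
The request and response shape described above might look like the following sketch. The field names, the `SearchRequest` class, and the `handle_search` function are illustrative assumptions rather than an existing API, and the date-range filter is omitted for brevity.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SearchRequest:
    query: str
    speaker_id: Optional[str] = None  # optional speaker filter
    offset: int = 0                   # pagination start
    limit: int = 10                   # page size


def handle_search(request, segments):
    """Filter segments, then return one page of results plus the total count."""
    needle = request.query.lower()
    matches = [
        s for s in segments
        if needle in s["text"].lower()
        and (request.speaker_id is None or s["speaker_id"] == request.speaker_id)
    ]
    page = matches[request.offset:request.offset + request.limit]
    return {
        "query": request.query,
        "total": len(matches),
        "offset": request.offset,
        "limit": request.limit,
        "results": page,  # each result carries timestamps, speaker, confidence
    }
```

Returning `total` alongside the page lets a client render pagination controls without issuing a second query.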

Rate limiting and authentication matter more than you might expect. Transcript search can be resource-intensive, especially semantic search across large archives. Implement reasonable limits while ensuring legitimate users get responsive service.
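
One common way to implement such limits is a token bucket, sketched below. This technique is an assumption about how you might enforce limits, not something the search system requires; the `clock` parameter exists only so the behavior can be tested without waiting.

```python
import time


class TokenBucket:
    """Allow short bursts up to `capacity`, refilling at `rate_per_sec`."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self, cost=1.0):
        """Return True and spend tokens if the request fits the budget."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

Passing a larger `cost` for semantic queries than for keyword queries is one way to reflect that some searches are more expensive than others.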

Common integration scenarios

Customer support systems benefit enormously from transcript search integration. Support agents can instantly search previous calls with the same customer, finding past issues and resolutions without making customers repeat themselves. When integrated with your CRM, searches automatically scope to the current customer's history.

Meeting platforms use transcript search to make recordings actionable rather than just archived. Instead of sharing hour-long recordings, you can share specific moments where decisions were made or action items assigned.

Compliance monitoring relies on transcript search to identify regulatory issues across thousands of calls. Financial services search for unauthorized investment advice. Healthcare organizations monitor for privacy violations. These integrations often trigger automated workflows—flagging calls for review or generating compliance reports.

Building production transcript search

Taking transcript search from prototype to production requires addressing performance, scale, and reliability challenges. A system that handles dozens of transcripts smoothly might collapse under thousands without proper planning.

Performance starts with your indexing strategy. As transcript volume grows, search latency can increase dramatically if you're not prepared. Consider index sharding to distribute data across multiple nodes and implement strategic caching for frequently searched terms.
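
Both ideas can be sketched with the Python standard library. The shard-routing function and the tiny `CORPUS` below are hypothetical stand-ins; a real deployment would rely on the sharding and caching features of its search engine.

```python
import hashlib
from functools import lru_cache


def shard_for(file_id, num_shards):
    """Route a recording to a shard with a stable hash of its file_id."""
    digest = hashlib.sha256(file_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


# Stand-in for an index; a tuple so cached results cannot go stale here.
CORPUS = (
    "the budget was approved",
    "pricing came up twice",
    "budget review next week",
)


@lru_cache(maxsize=1024)
def cached_search(term):
    """Cache results per term; clear the cache whenever the index changes."""
    return tuple(i for i, text in enumerate(CORPUS) if term in text)
```

A stable hash is used instead of Python's built-in `hash()` because string hashes are randomized per process, which would route the same file to different shards after a restart.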

Monitor these key metrics to maintain system health:

- Search response time: How long queries take from request to results

- Indexing lag: Time between transcription completion and searchability

- Query throughput: How many searches per second your system handles

- Storage growth: Index size as transcript volume increases
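
Three of these metrics are simple to compute from raw measurements, as the sketch below shows. The function names and the nearest-rank percentile method are choices made for this example; monitoring tools often use other percentile definitions.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile, e.g. pct=95 for p95 search response time."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def indexing_lag_s(transcribed_at, searchable_at):
    """Seconds between transcription completion and searchability."""
    return searchable_at - transcribed_at


def throughput_qps(query_count, window_s):
    """Average queries per second over a measurement window."""
    return query_count / window_s
```

Tracking a high percentile rather than the mean keeps one slow query, like the 240 ms outlier in a list of otherwise fast responses, from being averaged away.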

But here's what catches most teams off guard: production transcript search lives or dies on transcription quality. Poor audio quality, overlapping speakers, or domain-specific terminology can devastate transcription accuracy. When accuracy drops, users lose trust in search results and stop using the feature.

Current best practices for improving transcription quality for search go beyond selecting a model. They also include using features such as `keyterms_prompt` and the `prompt` parameter, available with the Universal-3 Pro Streaming model. These features let developers boost the accuracy of specific domain terms, names, and jargon, which is critical for a reliable search function.

Final words

Effective transcript search transforms audio and video from unstructured data into searchable knowledge bases by following a clear technical path: accurate speech-to-text conversion with precise timestamps, smart indexing strategies that balance performance with precision, thoughtful result display that provides context and navigation, and strategic integrations that connect insights to business workflows.

AssemblyAI's speech recognition models provide the accurate transcripts and word-level timestamps that production search systems require, handling diverse speakers and challenging audio conditions while delivering the reliability that makes transcript search a trusted tool rather than a frustrating experiment.

Frequently asked questions

How accurate does speech-to-text need to be for effective transcript search?

As a rule of thumb, you need at least 85% transcription accuracy for basic transcript search, and 95% or higher for production systems where users depend on search results. Poor transcription accuracy directly reduces search precision and user trust.
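
The answer above does not say how accuracy is measured. One common metric is word error rate (WER), with accuracy approximated as 1 minus WER; treating the percentages above that way is an assumption of this sketch, which computes WER with a standard word-level edit distance.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Levenshtein distance over words, keeping only the previous row.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1] / len(ref)
```

For example, transcribing "quarterly revenue grew" as "courtly avenue grew" gets two of three reference words wrong, and both of the missed words are exactly the ones a user would search for.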

What's the difference between searching transcripts and searching regular documents?

Transcript search requires temporal navigation—users need to jump to specific moments in audio or video, not just find text matches. This means your search system must preserve and surface word-level timestamps alongside text content.

Can transcript search work with real-time streaming audio?

Yes, but it requires incremental indexing that adds new transcript segments as they're generated during live transcription. Buffer segments for a few seconds before indexing to balance search freshness with system performance.
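
The buffering idea can be sketched as follows. The `BufferedIndexer` class, its three-second default, and the injectable `clock` are assumptions for illustration; a real service would also need a timer so that a quiet stream still gets flushed.

```python
import time


class BufferedIndexer:
    """Collect live segments and hand them to the index in small batches."""

    def __init__(self, flush_fn, max_age_s=3.0, clock=time.monotonic):
        self.flush_fn = flush_fn    # called with a list of segments
        self.max_age_s = max_age_s  # how long the oldest segment may wait
        self.clock = clock
        self.buffer = []
        self.oldest_at = None

    def add(self, segment):
        if not self.buffer:
            self.oldest_at = self.clock()
        self.buffer.append(segment)
        # Flush once the oldest buffered segment has waited long enough.
        if self.clock() - self.oldest_at >= self.max_age_s:
            self.flush()

    def flush(self):
        if self.buffer:
            self.flush_fn(list(self.buffer))
            self.buffer.clear()
```

Calling `flush()` explicitly when the stream ends makes sure the last few segments become searchable.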

How do I handle transcript search across multiple languages?

Use speech recognition models that support your target languages and implement language detection to route audio to appropriate transcription models. Index each language separately or use universal search indexes that can handle multilingual content.

What storage requirements should I plan for transcript search indexes?

Plan for search indexes that are roughly 20-50% of the size of your original transcript text, depending on your indexing strategy and metadata storage. Word-level timestamp storage significantly increases index size but enables precise navigation.

How does speaker diarization improve transcript search accuracy?

Speaker diarization lets you search for what specific people said rather than just finding words anywhere in a conversation. This context dramatically improves search relevance, especially for meeting analysis and multi-participant calls.
